Jeremy Wilson

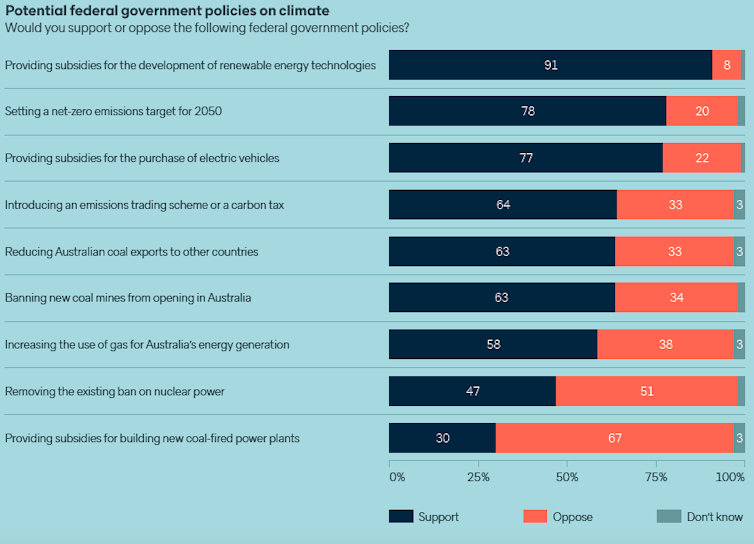

Mark Harvey, The University of Western Australia

After a century of scientific confusion, we can now officially add five new species to Australia’s long list of trapdoor spiders: secretive, burrowing relatives of tarantulas.

It all started in 1918, when a species known as Euoplos variabilis was first described. Since then, this species has been considered widespread throughout south-eastern Queensland.

However, in new research, fellow arachnologists from the Queensland Museum studied the physical appearance and DNA of these trapdoor spiders. They revealed this “widespread” species is actually several.

Many trapdoor spider species are short-range endemics, meaning they only occur in one small area. This makes them especially vulnerable to threats such as habitat destruction and degradation, which is why the discovery and description of these new species from Queensland is so important — they can now be protected from future threats.

Meet Australia’s trapdoor spiders

To many people, Australia’s spider diversity is a source of fear. To arachnologists like myself, it’s a goldmine.

Weird and wonderful new species are everywhere. While new discoveries are relatively common, it’s likely most Australian spider species are yet to be named by science.

Michael Rix

Trapdoor spiders live in burrows that usually have a hinged door at the entrance that the spider constructs using silk, soil or other material from the surrounding area. Their burrows can be camouflaged, but to a trained eye they’re easily found on the soil embankments beside walking tracks in eastern Australian rainforests.

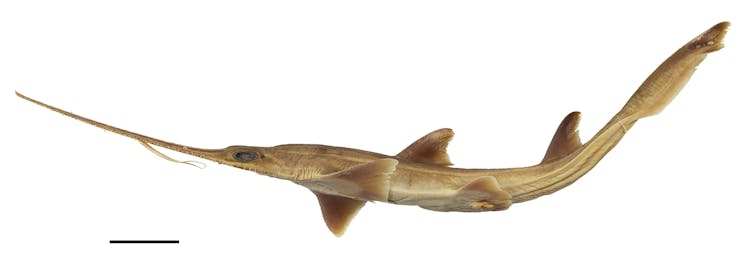

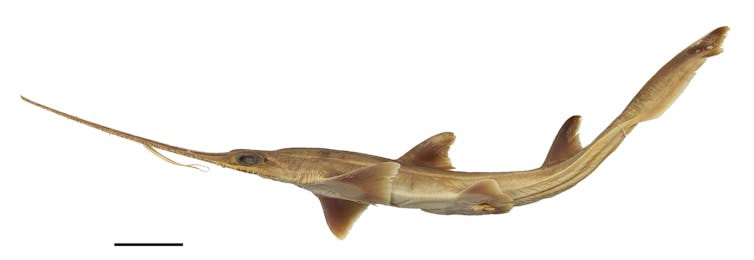

In the past few years, I’ve been part of a team studying the spiny trapdoor spiders — a group of relatively large (up to about seven centimetres long, including legs) but highly secretive spiders found throughout Australia. They belong to an ancient group called the Mygalomorphae that, alongside tarantulas, includes the infamous Australian funnel-web spiders.

Like other trapdoor spiders, adult male and female spiny trapdoor spiders look shockingly different. When males reach adulthood, their physical appearance changes: their legs get longer and thinner, and their first appendages (called “pedipalps”) develop into structures used for mating. In contrast, adult females remain short-legged and robust.

Male trapdoor spiders undergo this dramatic change because as adults they must leave their burrow and search for females to breed.

Their long legs presumably help them run faster and further in search of females, and also allow them to keep the vulnerable parts of their body out of harm’s way once they meet the (usually larger) female, who isn’t always happy to see them.

The mystery of the trapdoor spider from Mount Tamborine

These striking differences in appearance between male and female spiny trapdoor spiders (“sexual dimorphism”) were at the heart of the mystery regarding the true identity of Euoplos variabilis.

When the species was first described in 1918, it was based only on female spiders, which were red-brown, large and lived in the rainforest of Mount Tamborine, just south of Brisbane.

In 1985, a male spider, also from Mount Tamborine, was finally linked to the original females. Matching male and female trapdoor spiders of the same species can be difficult because they look so different.

This all changed when the Queensland Museum team began researching the spiny trapdoor spiders of eastern Australia in 2015. When they looked in the museum’s natural history collection, it seemed like males of the Mount Tamborine trapdoor spider were widespread, spanning Brisbane to the Sunshine Coast.

But strangely, they found females from different locations looked different.

While females from the Mount Tamborine rainforest were large and red-brown, those from the lowlands of north Brisbane were small and tan. And in the rainforest of the D’Aguilar Range, north of Brisbane, the females were even bigger, with a bright orange carapace and red legs.

Could these really all be the same species?

This mystery was solved in two steps

First, in 2018, the museum’s arachnologists discovered the seemingly widespread males were actually members of a completely different group of trapdoor spiders, which also occurs in eastern Australia. In other words, there had been a male/female mismatch!

Then, by collecting fresh trapdoor spiders around south-east Queensland and studying their DNA, they discovered the Mount Tamborine trapdoor spider actually doesn’t occur in Brisbane at all. In fact, it’s found only in the mountain ranges bordering New South Wales, with Mount Tamborine being its most northerly location.

Surprisingly, the female spiders found in Brisbane, the D’Aguilar Range and various other areas turned out to be several completely different species, all new to science.

These species can be distinguished by subtle differences in size and colour, and by differences in their DNA. The different species seem to be adapted to different habitats, at different elevations.

So, alongside Euoplos variabilis, the original Mount Tamborine trapdoor spider, the newly confirmed species are:

- Euoplos raveni and Euoplos schmidti, both from the lowland forests of the Brisbane Valley, south of the Brisbane River

- Euoplos regalis from the upland rainforest of the D’Aguilar Range

- Euoplos jayneae from the lowland forests of the Sunshine Coast hinterland

- Euoplos booloumba from the upland rainforest of the Conondale Range

These five new species bring the total number of known spiny trapdoor spider species to 258.

What happens now?

And so, the mystery was solved. Another small fraction of Australia’s beautiful biodiversity is known to science and can be preserved. But the story isn’t over just yet.

To properly conserve these species, we need to understand more about how they live. This is why the research team and I are undertaking a long-term study on one of these new species, Euoplos grandis from the Darling Downs. We hope to learn the intricacies of their lives and to track whether populations are declining from threats such as habitat destruction.

We’re also continuing our mission to discover and describe new species of trapdoor spider, not just from Queensland, but from all around Australia.

The story of the Mount Tamborine trapdoor spider exemplifies the type of detective work Australian scientists undertake on all types of animal groups. But when it comes to invertebrates, we’ve barely scratched the surface, with new species of bugs, spiders, worms and more waiting to be discovered.

Working on discovering these invertebrates comes with a sense of urgency. These species need a name and formal protection, before it’s too late.

Jeremy Wilson and Michael Rix from Queensland Museum were co-authors on this article.

Mark Harvey, Curator of Arachnology at the Western Australian Museum, Adjunct Professor, The University of Western Australia

This article is republished from The Conversation under a Creative Commons license. Read the original article.
